By Squarepusher

It’s been a long time since the last release, and it’s been a long time since any news has been posted at all.

The relaunch

So, first things first – yes, there will be a new release soon. I’m conflicted on whether to call it RetroArch 1.0 but I think we might as well get it over with so that we can have some kind of sane versioning from here on out.

We will relaunch on the Google Play Store. We still don’t know why we were pulled from the Play Store last time; we never got a response from Google telling us the exact reason. While this all sucks really badly and quite frankly makes me have second thoughts about committing myself too much to Google’s app store, we will try again. This time we have taken a few contingency measures –

- Some GPL purists seem to want the option of being able to only have cores in RetroArch that are GPLv2 and/or GPLv3, but no cores which use proprietary non-commercial licenses. Rumor has it that this even led to RetroArch’s takedown from the Google Play store – the argument being cited was “license violation”. While it’s a quite spurious argument and I would have liked Google to have contacted me first so that I could have at least given my side of the story, we will meet these people half-way this time.

After the app gets installed, a popup disclaimer will show up. This asks you whether to remove the non-GPL licensed cores or whether to keep them. This is a permanent option and the only way to undo this is to get rid of your ‘user settings’ – upon which you get greeted with the same disclaimer again.

- The logo has been redesigned by Agnes Heyer and it’s no longer a bog-standard Space Invaders icon. This is in case the takedown was over some copyright claim over the Space Invaders imagery being used. It’s still an invader, but at least it has a distinctive enough art style now as to make it “different enough”. Agnes also supplied us with some very nice handdrawn art that we’ll be using on the Google Play store.

- Images from games running in RetroArch Android will no longer be on the store listing. Just in case we got pulled over that alone.

Anyway, hopefully this is sufficient to address all possible issues. Let it be said that if we get pulled again after this re-launch and Google doesn’t even have the decency to give us an e-mail explaining why they took it down, that will be it for me – I’m not going to continue republishing on the Play Store just because Google entertains random accusations of “license violations/copyright infringement” from whoever wants the app pulled. Google’s ‘public relations’ is quite frankly atrocious, not to mention insulting and demeaning, since publishing apps on the Google Play Store is not free – we had to pay 25 dollars to be granted the “honor” by Google of letting people actually install our app on their phones/tablets. Now granted, this is not yet as bad as Apple, but freedom this most certainly is not. I expect at least some very basic customer support for those 25 dollars instead of some “automated web form” that leads nowhere.

Libretro/RetroArch going beyond traditional games/emulators

Anyway, with that negativity out of the way, I am very excited to finally uncover what I and others have been working on these past few months during the blackout. I have always maintained that RetroArch and libretro are about ‘more’ than just emulators and game ports, but to date there was not much to show for this claim.

I look at RetroArch not merely as a “frontend” for an application API, but also as a truly crossplatform architecture – something that abstracts input/video/audio/location/camera streams and lets you – the app creator – easily make use of these various streams to create very rich, full-featured applications without having to worry about which platform you’re developing for. Any game and/or emulator out there needs at least input/video/audio streams, and libretro/RetroArch so far has been catering to this need for a good few years now.

However, ever since the Wii and things like Kinect/PS Move, the videogames world is no longer as rigidly defined as it used to be, and some videogames are not even “videogames” anymore. Or, to put it more simply, just like art, nobody knows what a videogame is anymore. Thanks to motion sensors, cameras, and location-based sensors, we can do reasonably cool new things with a traditional 3D videogame engine that we simply weren’t able to do years ago, because the devices simply did not have these kinds of hardware features and the infrastructure was not there. This kind of technology allows us to make stuff that can’t easily be shoehorned into pre-existing “game genres” like beat ’em ups, adventure games, RPGs, and so on.

So you’ve got things like augmented reality now establishing themselves thanks to mobile phones and tablets, and you’ve got peripherals like Oculus Rift resurrecting Virtual Reality from that early ’90s grave. You’ve got Sony dabbling with Augmented Reality on PS Vita and trying to get people energized and excited about that platform to no avail. What all these things have in common is that there’s no real easy way for a developer to target all that technology with ‘one codebase’, ‘one app’, through some ‘common’ interface. If you want to make a game/app utilizing all this stuff for most of the mainstream platforms out there (PC/Android/iOS/etc), you had better be ready to invest some serious time into delving into every conceivable API there is and trying to work around insane platform limitations. There’s a big window of opportunity here to create a nice, crossplatform way to tap into all these features without having to resort to big, bloated APIs that all have to be duct-taped together to achieve the desired app.

For instance, if you want to develop a native app for Android right now that utilizes the camera and location services, you can forget about any easy NDK API interfaces being there. It’s all on the Java side and you’re just expected to come up with a big ‘hack’ which of course is not published anywhere. I had a look at the way OpenCV made camera access from the NDK possible and it just was not what I wanted – it was basically a big hack job in native land that would never really be scalable and would require continuous maintenance. I also didn’t like how I needed to pull in this big monstrous SDK just to be able to access the camera.

So we came up with our own solution for this. It’s a basic JNI shim job and it’s compatible from Android 3.0 up to the latest Android version right now – 4.4.2. And it’s very, very fast. You basically pass a GL texture ID from C land to the RetroArch Java frontend side – it uses this ID for binding the camera framebuffer texture, and from there you can do with this camera texture whatever you want from within the confines of your libretro app.
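To make the hand-off a bit more concrete, here is a minimal sketch of the idea in plain C. Every name in it (`camera_request`, `camera_frame_cb`, `frontend_deliver_frames`, and so on) is made up for illustration and is not the actual libretro or RetroArch interface. The "frontend" is just a stand-in function, and no real GL or JNI calls are made. It only shows the shape of the exchange: the core owns a texture ID, hands it over together with a callback, and gets called back once per camera frame.

```c
#include <assert.h>
#include <stdio.h>

/* Hypothetical names throughout: this is NOT the real libretro camera
 * interface, only an illustration of the hand-off described above. */

/* Called by the frontend each time the camera has filled the texture. */
typedef void (*camera_frame_cb)(unsigned texture_id,
                                unsigned width, unsigned height);

/* What the core hands to the frontend when it asks for camera frames. */
struct camera_request {
    unsigned        texture_id; /* GL texture name owned by the core */
    camera_frame_cb on_frame;   /* core callback, one call per frame */
};

/* ---- core side ---------------------------------------------------- */

static unsigned frames_seen;
static unsigned last_texture;

static void core_on_camera_frame(unsigned texture_id,
                                 unsigned width, unsigned height)
{
    /* A real GL core would bind texture_id here and draw with it,
     * for example on the faces of a cube. This sketch only counts. */
    last_texture = texture_id;
    frames_seen++;
    printf("frame %u: texture %u, %ux%u\n",
           frames_seen, texture_id, width, height);
}

/* ---- frontend side (stand-in for a platform camera driver) -------- */

static void frontend_deliver_frames(const struct camera_request *req,
                                    unsigned count)
{
    unsigned i;
    for (i = 0; i < count; i++) {
        /* A real driver would first attach the camera framebuffer to
         * req->texture_id; the 640x480 size is made up. */
        req->on_frame(req->texture_id, 640, 480);
    }
}

/* Wires both sides together and returns how many frames the core saw. */
unsigned run_camera_demo(void)
{
    struct camera_request req;

    req.texture_id = 7; /* pretend result of glGenTextures() */
    req.on_frame   = core_on_camera_frame;

    frames_seen = 0;
    frontend_deliver_frames(&req, 3);

    assert(last_texture == req.texture_id);
    return frames_seen;
}
```

Calling `run_camera_demo()` from a `main` function runs the whole exchange once. The point of the split is that the core side never needs to know which platform filled the texture.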

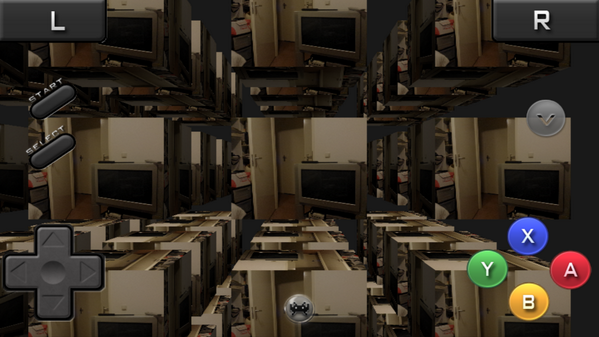

We have made an example core that can instantiate a large amount of cubes with each face showing the camera screen. The only dependencies here are that it’s a libretro GL core, and that it runs from within RetroArch Android. There is no dependency on OpenCV, on any external “native C” camera shim driver library, etc.

The same goes for location APIs – it can’t be accessed from the NDK normally.

RetroArch slowly becoming suitable for AR applications

The big plus point here is that you can write a core utilizing this stuff in a very platform-agnostic way – the only thing you have to worry about is implementing a bunch of callbacks, and RetroArch (the frontend implementing the libretro API) does the rest. You don’t have to worry about the Android camera driver behaving differently from the iOS camera driver or vice versa, or about Android’s location API versus the iOS/OSX location API – that is all handled by the frontend. But you can still get access to location data streams and camera streams from within your GL app, with minimal effort.
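As a rough illustration of what "a bunch of callbacks" can look like for location data, here is a small self-contained C sketch. The `location_interface` struct, the `fake_*` driver and the coordinates are all assumptions invented for this example; they are not the libretro API or any real platform driver. The idea shown is only that the core talks to a table of function pointers, and whichever driver the frontend plugs in (Android, Apple, or this fake one) stays invisible to the core.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdio.h>

/* Hypothetical interface: names are illustrative, not the libretro API. */
struct location_interface {
    bool (*start)(void);
    void (*stop)(void);
    bool (*get_position)(double *lat, double *lon);
};

/* ---- frontend side: one possible driver --------------------------- */
/* An Android or Apple driver would fill in the same table. */

static bool fake_running;

static bool fake_start(void) { fake_running = true; return true; }
static void fake_stop(void)  { fake_running = false; }

static bool fake_get_position(double *lat, double *lon)
{
    if (!fake_running)
        return false;
    *lat = 52.0; /* made-up coordinates */
    *lon = 4.0;
    return true;
}

static const struct location_interface fake_location_driver = {
    fake_start, fake_stop, fake_get_position
};

/* ---- core side: only ever sees the table -------------------------- */

bool core_read_position(const struct location_interface *loc,
                        double *lat, double *lon)
{
    bool ok;

    if (!loc || !loc->start())
        return false;
    ok = loc->get_position(lat, lon);
    loc->stop();
    return ok;
}

/* Runs one read through the fake driver; returns true on success. */
bool run_location_demo(void)
{
    double lat = 0.0, lon = 0.0;
    bool ok = core_read_position(&fake_location_driver, &lat, &lon);

    assert(!fake_running); /* the core stopped the driver again */
    if (ok)
        printf("position: %.1f, %.1f\n", lat, lon);
    return ok;
}
```

Swapping `fake_location_driver` for another table with the same three entries would leave `core_read_position` untouched, which is the platform-agnostic property described above.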

Just like with the camera, proofs of concept have been made that demonstrate both GPS functionality and camera functionality working. They tick all the boxes that to me define RetroArch – cross-platform, portable, fast, and above all, clean and slimline.

Cross-platform – the camera works on Linux (Video4Linux2), for the Emscripten WebGL port, iOS, and Android. The location interface has two implementations – one for Android (through the Google Play Services), and an Apple implementation that works for both OSX and iOS (in OSX’s case it should even be backwards compatible from Snow Leopard and up).

Portability – in that both the camera and the location functionality get exposed to the core through the libretro API. So the job of having to implement all this location functionality for every platform is totally outsourced to the frontend side – you don’t even have to think about your app being “for Android” or “for iOS”.

Performance – in that the camera driver that has been implemented for both iOS and Android is as fast as it could be. You’re simply binding the camera framebuffer to a GL texture ID and then re-routing that back to the libretro core, where you’re able to do with this texture whatever you want – you could turn it into a skybox, or you could render it on top of every cube – and even instance those cubes (2 to the power of 16, or 65,536, no less) while still getting very high performance.

I’m fairly confident that with a few more tests we can easily demonstrate the potential of the libretro API to the outside world as something that is far more significant than simply “something that allows you to port emulators in a cross-platform way”, and I’m quite excited about being very close to realizing that goal. Existing users should not take this as a sign that the stuff they care about – emulators/games – is going to play a diminishing role – this is about being all-inclusive, about creating a big entertainment platform that allows the developer to do whatever he wants his multimedia applications to do.

Emulators

So, where does this leave the emulation side? The stuff most people still care about when it comes to RetroArch? Well, the Mupen64 libretro core has been steadily improving – right now most effort has been focused on improving the Glide64 video plugin. I still would have preferred things to be even more solid at this point but I’d say it is getting close for a first release – people should be aware anyway that this is a continual “Work In Progress” and that improvements will be made on an ad-hoc basis.

Anyway, I can’t quite keep track of all the cores that have been added since, nor do I really know whether it’s wise to dump the MAME 2013/2010 cores into the main package, since the MAME 2013 core alone is 123MB in size. This wouldn’t go down well with a number of users that have complained about RetroArch’s filesize – and the more cores that get added, the bigger a problem that will be. Perhaps expansion APKs are a temporary solution, but eventually I think we will need to have the equivalent of a ‘package manager’ within the app that can pull in cores remotely. I’m not sure who would have to do the hosting though, because we already tried that approach once for the Windows port and it turned out to be both unmaintainable and too expensive – server cost-wise.

When the release gets made, I may go through the backlog of all the updates that have happened this past half year so people have a better overview of exactly what is new. Hopefully that time will be soon. As a post-mortem I will follow up the release (when it happens) with a post that covers all the UI improvements (and other improvements) that have been made.

Avuton Olrich

December 22, 2013 — 6:00 am

Consider doing plugin APKs like ScummVM does.

mumchmeither

December 22, 2013 — 2:01 pm

Glad to hear about the progress.

In regards to the size issue, why not just provide a base version (little to no cores packaged) wherein the user can manually load their cores of choice AND a full install for those for whom size concerns aren’t an issue?

RetroGamer

December 23, 2013 — 12:59 pm

Will it be possible to have better support for gamepads like Moga? No matter how much I try, I cannot get it to work with RetroArch in actual gameplay, but the menus etc. work with it.

Also, I have a large TV and the games don’t scale well, they look blocky when made to stretch full screen which is expected but there are a few emulators on Google play that scale games pretty well.

And lastly, on some of the emulators like Sega, the sound is terrible when it’s connected to the TV through HDMI but PlayStation sounds normal.

We know you have put a lot of time and effort into this project and I hope that others appreciate it as much as some of us do, you’re doing a great job guys.

Thanks.

anonymous

December 30, 2013 — 8:16 pm

I really hope you add automatic selected touch screen overlay, it was the only problem of the old retroarch version, and was really bad.

AndresSM

January 7, 2014 — 7:04 am

What do you mean with automatic selected touch screen?

Iriez

January 5, 2014 — 10:09 pm

Hey SquarePusher! Can you reach out to me on IRC? We can discuss the possibility of hosting your APKs / cores / package manager.

Always willing to help support the cause.

I don’t always respond quickly so hang around, but I usually pop in at least twice a day 🙂

Thanks!

Squarepusher

January 16, 2014 — 1:33 pm

Sure, that could be a good idea.

Natanael L

January 6, 2014 — 10:58 pm

On distribution of the Android app: Get yourself listed on F-Droid! It’s a repository for open source apps. http://f-droid.org

Also, is there going to be multiplayer support on things like Gameboy Advance ROMs? If you could pull off implementing something like WiFi Direct or Bluetooth based multiplayer gaming (you’d need to add a networking API for the emulator cores), it could be possible to play things like Sonic among friends. It would be even cooler if it could work cross-platform over LAN/WLAN so you could play with your Android against somebody on a laptop or console.

AndresSM

January 7, 2014 — 7:10 am

Netplay is a tricky niche feature that doesn’t work most of the time. GBA link is quite sensitive regarding timing as far as I know, and before it can happen the core needs to support it. I think VBA-M does support GBA-Link… hopefully it will happen someday.

Natanael L

January 6, 2014 — 11:00 pm

Quick update – looks like your app is on F-Droid already, but it’s not yet updated. You need to get it updated and advertise that option more!

AndresSM

January 7, 2014 — 6:56 am

But what is the advantage of doing that? You can sideload from here just the same.

An idea though.. I’ll add a QR to the download

BenMcLean

January 11, 2014 — 6:36 pm

F-Droid won’t even let you distribute the non-GPL’d cores through their service at all, even with the option to remove them, so I’d recommend against bothering to get on F-Droid for something like this, despite the fact that I like F-Droid and use it for getting GPL apps.

Great work. I love RetroArch! 😀