RetroArch 1.7.8 has just been released! Grab it here.

If you’d like to show your support, consider donating to us. Check here in order to learn more.

It would be an understatement to say that version 1.7.8 is a pretty big deal. Two major landmark features have been added in this version, on top of numerous enhancements. In fact, there is once again so much to talk about that we have had to split this release post into several separate blog articles.

RetroArch AI Project

Welcome to the future! Some time ago, a RetroArch bounty was posted proposing that OCR (Optical Character Recognition) and Text To Speech services be added to RetroArch.

Some months later, here we are: a bounty hunter valiantly took on the challenge, and there is now a fully fledged AI Service up and running that works seamlessly with RetroArch!

To use the AI Service, first enable it (it should be enabled by default), then set up the server URL. This can be a local network address if you have the server up and running on your own network, or a public IP/URL if you are going through a hosted service. After that, you only need to bind a button or key to the so-called “AI Service” action, which you can do under Settings – Input – Hotkeys.

In this video, you can see each of the two modes the AI Service currently supports:

Speech Mode – Upon pressing the AI Service button, the screen is quickly scanned for text, and the recognized text is then translated to speech. You can press the AI Service button at any time; it will take a snapshot of the current screen and try to process it. This mode does not interrupt the game: the game keeps running after you hit the button, and the speech is output as soon as the server has responded to your query and piped the sound back to RetroArch.

Image Mode – In this mode, the AI Service tries to replace the onscreen text with the translated text. For instance, the game in the video above is in Japanese, so when we hit the AI Service button, it tries to replace the Japanese text with an English translation. This mode interrupts the game: when you hit the AI Service button, the game pauses and shows you an image with the replacement text UNTIL you press either the AI Service hotkey or the Pause hotkey again, after which the game resumes.
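To make the difference between the two modes concrete, here is a toy state model of the hotkey behavior described above. It is a hypothetical sketch, not RetroArch code: the class name, the `"speech"`/`"image"` mode strings and the request counter are all illustrative assumptions, and network traffic is reduced to a counter.

```python
class AIServiceModel:
    """Toy model of the two AI Service modes; hypothetical, not RetroArch's code."""

    def __init__(self, mode: str) -> None:
        if mode not in ("speech", "image"):
            raise ValueError("mode must be 'speech' or 'image'")
        self.mode = mode
        self.paused = False      # is emulation currently halted?
        self.requests_sent = 0   # screen snapshots sent to the AI server

    def press_ai_hotkey(self) -> None:
        if self.mode == "image" and self.paused:
            # Second press in Image Mode: dismiss the translated image and resume.
            self.paused = False
            return
        # Both modes send a snapshot of the current screen to the server.
        self.requests_sent += 1
        if self.mode == "image":
            # Image Mode halts the game while the translated image is shown.
            self.paused = True
        # Speech Mode never pauses; audio plays whenever the server replies.

    def press_pause_hotkey(self) -> None:
        # Only the "dismiss the translation" case is modelled here.
        if self.mode == "image" and self.paused:
            self.paused = False


speech = AIServiceModel("speech")
speech.press_ai_hotkey()
print(speech.paused)  # False: the game keeps running

image = AIServiceModel("image")
image.press_ai_hotkey()
print(image.paused)   # True: paused until a hotkey is pressed again
image.press_pause_hotkey()
print(image.paused)   # False
```

The key design point the model captures is that only Image Mode owns a "paused" state; Speech Mode is fire-and-forget.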

We encourage everybody who wants to give us feedback on this amazing, revolutionary feature to head to our Discord server, specifically the retroarch-ai channel. We’d love to hear your feedback and we’d like to develop this feature further, so your input is not only appreciated but necessary!

Read the instructions on how to set this up here.

Also make sure to read our previous blog articles on this subject, available here.

RetroArch Disc Project

Real CD-ROM functionality is now included in RetroArch 1.7.8 for both Linux and Windows PCs. Please note that this functionality is far from finished, and the performance you can get out of it right now depends heavily on your drive and OS. Generally, it’s fair to say that Linux is the more fleshed out of the two platforms so far, and performance and reliability are best there for the moment.

The following cores have been updated with physical CD-ROM support:

- Genesis Plus GX

- Mednafen/Beetle PSX

- Mednafen/Beetle Saturn

- Mednafen/Beetle PCE/Fast

- 4DO

We encourage people to test as many of the drives at their disposal as possible and then report back to us on Discord (channel #discproject).

Also make sure to read our previous blog articles on this subject, available here.

RetroArch Android – now a hybrid 64bit/32bit build

Read this blog article here for more information on this important change. We apologize for the inconvenience, but Google’s new store rules forced our hand, and it was necessary to add the 64bit version to the main release.

To be clear, from now on the only place where we can provide a 32bit-only version will be our own site. Google’s rules for the Google Play Store require each app to include a 64bit codepath alongside its 32bit one, and on 64bit devices the 64bit code is used automatically, with no way to switch to the 32bit version.

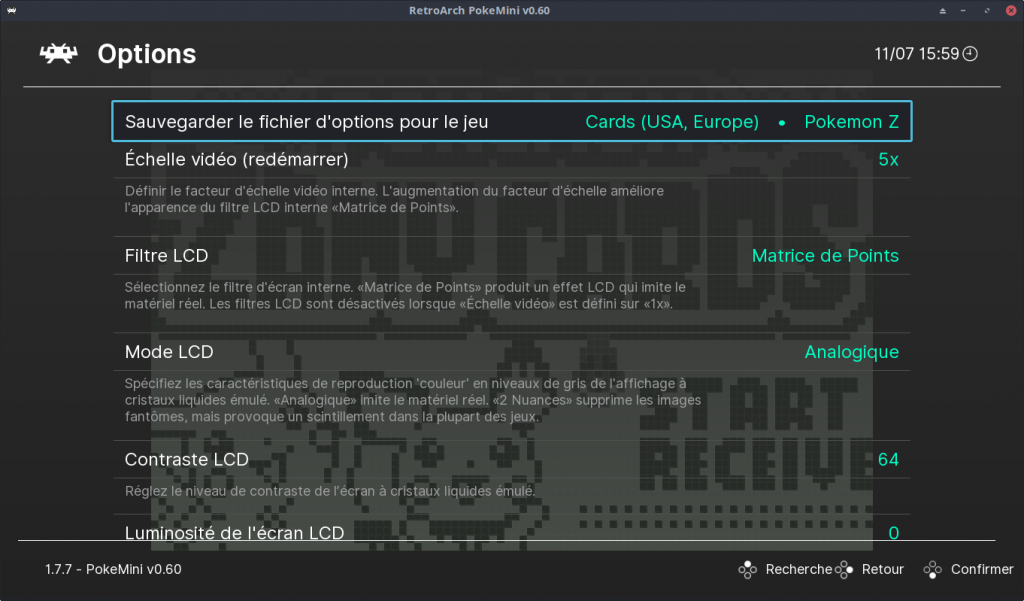

Enhanced core options

A big flaw of the libretro core options API was its lack of any internationalization support, or even just a sane way to specify a default value without having to make it the first entry in a sequential list.

Libretro and RetroArch now boast support for enhanced core options. Core options can now have sublabels, and they can, in theory, be translated into any language.

Core options can now also be shown or hidden.

Several cores have already received a Turkish translation of their options.
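As a rough illustration of what "enhanced" means here, the sketch below models an option with the same fields as libretro.h’s `retro_core_option_definition` (`key`, `desc`, `info`, `values`, `default_value`) and shows per-option fallback to US English plus hiding. It is a Python mirror for explanation only, not the C API. The `visible` flag is a simplification of the show/hide mechanism (`RETRO_ENVIRONMENT_SET_CORE_OPTIONS_DISPLAY`), the dict-based translation table simplifies the real `us`/`local` definition arrays, and the option keys are made up.

```python
from dataclasses import dataclass, replace
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class CoreOption:
    # Field names follow libretro.h's retro_core_option_definition.
    key: str
    desc: str                                       # menu label
    info: str                                       # new: sublabel text
    values: Tuple[Tuple[str, Optional[str]], ...]   # (value, optional display label)
    default_value: str                              # new: need not be the first value
    visible: bool = True                            # stand-in for per-option show/hide


def localize(us: List[CoreOption],
             local: Dict[str, Tuple[str, str]]) -> List[CoreOption]:
    """Apply translated (desc, info) pairs, falling back to US English per option."""
    return [replace(o, desc=local[o.key][0], info=local[o.key][1])
            if o.key in local else o
            for o in us]


def menu_entries(options: List[CoreOption]) -> List[str]:
    """Labels the frontend would show: hidden options are skipped."""
    return [o.desc for o in options if o.visible]


OPTIONS_US = [
    CoreOption("example_region", "Region", "Region of the emulated console.",
               (("auto", "Auto"), ("ntsc-u", "NTSC-U"), ("pal", "PAL")),
               default_value="ntsc-u"),
    CoreOption("example_debug", "Debug Overlay", "Developer-only overlay.",
               (("disabled", None), ("enabled", None)),
               default_value="disabled", visible=False),
]

OPTIONS_TR = localize(OPTIONS_US,
                      {"example_region": ("Bölge", "Emüle edilen konsolun bölgesi.")})
print(menu_entries(OPTIONS_TR))  # ['Bölge']
```

Note that the default (`ntsc-u`) is no longer tied to the first value (`auto`), which is exactly the limitation described above.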

Audio device selection (Windows)

It is now possible to choose between the available audio devices when using the XAudio2, DirectSound or WASAPI drivers. To do this, go to Settings -> Audio and press left or right to cycle through the devices. You can also set `audio_device` inside your config file to either the index of the device or its actual name.
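Since `audio_device` (for example `audio_device = "1"` in retroarch.cfg) accepts either an index or a name, a frontend has to decide how to interpret the value. The sketch below shows one plausible lookup order; it is hypothetical and not RetroArch’s actual driver code. The exact-name-first order, zero-based indexing, and the device names are all assumptions for illustration.

```python
from typing import List, Optional


def resolve_audio_device(setting: str, devices: List[str]) -> Optional[str]:
    """Map an audio_device value to a device name; hypothetical lookup order."""
    setting = setting.strip()
    if not setting:
        return None                  # empty: leave it to the driver's default device
    if setting in devices:
        return setting               # exact device-name match
    if setting.isdecimal():
        index = int(setting)
        if index < len(devices):
            return devices[index]    # assumed zero-based index into the device list
    return None                      # unknown value: fall back to the default


devices = ["Speakers (Example Audio)", "Headphones (Example USB)"]
print(resolve_audio_device("1", devices))                         # Headphones (Example USB)
print(resolve_audio_device("Speakers (Example Audio)", devices))  # Speakers (Example Audio)
```

Checking names before indices avoids misreading a device whose name happens to be a number.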

Multi-touch lightgun controls (Android/iOS)

With RetroArch 1.7.8, it is possible to use your fingers as a lightgun on iOS and Android. Not only that, but it supports multi-touch too! This video demonstrates all the cores that include support for this new feature. The device used here is an iPhone XS Max, and it’s plenty powerful enough to run even the likes of Mednafen/Beetle Saturn! Here is a list of the cores that support this so far (along with the systems they emulate):

- NES/Famicom (FCEUmm)

- Super Nintendo/Super Famicom (Snes9x)

- Sega Master System (Genesis Plus GX)

- Sega Megadrive/Genesis (Genesis Plus GX)

- Sony PlayStation (Mednafen/Beetle PSX)

- Sega Saturn (Mednafen/Beetle Saturn)

- Arcade (MAME)
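At its core, a touch lightgun has to convert a finger position in screen pixels into the coordinate space a core reads. The sketch below assumes the same [-0x7fff, 0x7fff] range that libretro uses for pointer coordinates and treats touches outside the game image (for example on letterbox bars) as offscreen shots. It is an illustrative mapping, not RetroArch’s input driver code; the function name and viewport tuple are hypothetical.

```python
from typing import Optional, Tuple

COORD_MAX = 0x7FFF  # assumed libretro-style range: [-0x7fff, 0x7fff]


def touch_to_lightgun(tx: float, ty: float,
                      viewport: Tuple[float, float, float, float]
                      ) -> Optional[Tuple[int, int]]:
    """Convert a touch point (pixels) to libretro-style coordinates.

    viewport is (x, y, width, height) of the game image on screen.
    Returns None when the touch lands outside it (e.g. on letterbox bars),
    which a core would treat as an offscreen shot.
    """
    vx, vy, vw, vh = viewport
    if not (vx <= tx < vx + vw and vy <= ty < vy + vh):
        return None

    def scale(pos: float, origin: float, size: float) -> int:
        t = (pos - origin) / size   # 0.0 at one edge, just under 1.0 at the other
        return round(t * 2 * COORD_MAX - COORD_MAX)

    return scale(tx, vx, vw), scale(ty, vy, vh)


# One result per finger, so several touches can be handled at once.
touches = [(960, 540), (240, 0), (100, 500)]
viewport = (240, 0, 1440, 1080)   # 4:3 image centred on a 1920x1080 screen
print([touch_to_lightgun(x, y, viewport) for x, y in touches])
# [(0, 0), (-32767, -32767), None]
```

How additional fingers map to trigger or other lightgun buttons is not covered by this sketch.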

Playlist Thumbnails Updater

A new entry has been added to the Online Updater: Playlist Thumbnails Updater.

This opens a menu that displays all existing playlists. When one is selected, each entry of the playlist is scanned, and any missing thumbnails are downloaded.

This is a lighter alternative to using the huge thumbnail zip archives, and it should work on consoles and other devices with limited RAM. It also saves disk space, since only the thumbnails that will actually be used are downloaded.
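The scan step described above boils down to "work out which thumbnail files each playlist entry needs, then keep only the ones not on disk". Here is a minimal sketch of that step. The three category folders follow the libretro-thumbnails repositories; the `<root>/<system>/<category>/<label>.png` layout and the list of characters replaced with `_` are assumptions based on libretro-thumbnails naming conventions, and the functions are hypothetical. Downloading is left out.

```python
import re
from pathlib import Path
from typing import Iterable, List

# Thumbnail categories used by the libretro-thumbnails repositories.
THUMBNAIL_TYPES = ("Named_Boxarts", "Named_Snaps", "Named_Titles")
# Characters assumed to be replaced with "_" in thumbnail file names.
_UNSAFE = re.compile(r'[&*/:`<>?\\|]')


def thumbnail_filename(label: str) -> str:
    return _UNSAFE.sub("_", label) + ".png"


def missing_thumbnails(thumb_root: Path, system: str, labels: Iterable[str]) -> List[Path]:
    """List every thumbnail file a playlist would need that isn't on disk yet."""
    missing = []
    for label in labels:
        name = thumbnail_filename(label)
        for kind in THUMBNAIL_TYPES:
            path = thumb_root / system / kind / name
            if not path.exists():
                missing.append(path)   # only these would be downloaded
    return missing


print(thumbnail_filename("Sonic & Knuckles (World)"))  # Sonic _ Knuckles (World).png
```

Because only the paths in the returned list are fetched, memory and disk use scale with the playlist rather than with the full archive.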

On-demand thumbnail downloading

This feature automatically downloads thumbnails for a game when the user hovers over it inside a playlist. You can enable this option under either Online Updater or Settings – Network. NOTE: We have disabled this by default, since it performs an HTTP request for every playlist entry that does not already have thumbnails installed.
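The trade-off mentioned in the note is easiest to see in a small model: each hover over an entry without thumbnails can trigger a request. The sketch below is hypothetical (class and callback names are illustrative, and `fetch` stands in for one HTTP request). Remembering which entries were already requested is a design choice of this sketch rather than documented RetroArch behavior.

```python
from typing import Callable, Set


class OnDemandThumbnails:
    """Hypothetical sketch: fetch missing thumbnails lazily, on hover."""

    def __init__(self, has_thumbnail: Callable[[str], bool],
                 fetch: Callable[[str], None], enabled: bool = False) -> None:
        self.has_thumbnail = has_thumbnail
        self.fetch = fetch            # stands in for one HTTP request per call
        self.enabled = enabled        # off by default, as in this release
        self._requested: Set[str] = set()

    def on_hover(self, label: str) -> bool:
        """Return True if hovering over this entry triggered a download."""
        if not self.enabled or self.has_thumbnail(label) or label in self._requested:
            return False
        self._requested.add(label)    # don't hit the server again for the same entry
        self.fetch(label)
        return True


installed = {"Game A"}
fetched = []
thumbs = OnDemandThumbnails(lambda name: name in installed, fetched.append, enabled=True)
for entry in ["Game A", "Game B", "Game B", "Game C"]:
    thumbs.on_hover(entry)
print(fetched)  # ['Game B', 'Game C']
```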

Shader Usability Changes

A number of changes, big and small, have been made to shaders and the shader menu.

You’ll notice the shader menu has been tidied up a bit: all the save options are now in a single “Save” submenu, and new “Remove” options have been added for auto-loading presets.

There’s a new option for a “global” auto-loading preset which, as you can imagine, applies to all content you load.

As you may remember, shaders used to be saved automatically as soon as they were loaded in the shader menu.

This had a lot of drawbacks. For example, it wasn’t really possible to have content that doesn’t use any shaders at all without turning shaders off completely.

It also meant that any content without any auto-loading preset would use the last menu-loaded shader, which was very unintuitive.

This is why shaders are now only saved manually, giving more control over what content uses which shader.

Under the hood, auto-loading presets have a new trick up their sleeves: the `#reference` directive.

In practical terms, auto-loading presets can now point to other presets. So if you load any shader preset, or save one via ‘Save As’, and then immediately save it as an auto-loading preset, the auto-loading preset will point to the original preset.

This is especially useful for presets you built yourself and are still tweaking, because you don’t have to resave them as auto-loading presets every time.
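To show how a referencing preset can lead back to the real one, here is a minimal resolver sketch. It assumes a line of the form `#reference "../shaders/crt.glslp"` (quotes treated as optional) and resolves relative targets against the referencing preset’s folder. It also assumes chains may be followed and guards against cycles. This is not RetroArch’s parser, and the function names are hypothetical.

```python
import re
from pathlib import Path
from typing import Optional

# Assumed syntax: a line such as  #reference "../shaders/crt.glslp"  (quotes optional here).
_REFERENCE = re.compile(r'^\s*#reference\s+"?([^"\r\n]+?)"?\s*$')


def referenced_path(preset: Path) -> Optional[Path]:
    """Return the path named by the preset's #reference line, or None."""
    for line in preset.read_text().splitlines():
        match = _REFERENCE.match(line)
        if match:
            # Relative references are resolved against the referencing preset's folder.
            return (preset.parent / match.group(1)).resolve()
    return None


def resolve_preset(preset: Path) -> Path:
    """Follow #reference links until reaching a preset that holds the real settings."""
    seen = set()
    current = preset.resolve()
    while True:
        if current in seen:
            raise ValueError(f"#reference cycle involving {current}")
        seen.add(current)
        target = referenced_path(current)
        if target is None:
            return current
        current = target
```

Because the auto-loading preset only stores a pointer, later edits to the original preset are picked up without resaving, which is the benefit described above.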

Saved shader presets now use relative paths, which makes them portable across systems and allows for easy sharing of your custom presets. Beware, though: if you want to move presets into subfolders, the relative paths need to be readjusted as well.
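The warning about subfolders follows directly from how relative paths work. In this sketch, a path is stored relative to the folder holding the preset and turned back into an absolute path on load. The folder layout and file names are made-up examples, and POSIX-style paths are used only to keep the output platform-independent.

```python
import posixpath


def to_relative(shader: str, preset: str) -> str:
    """Shader path as stored in a preset: relative to the preset's own folder."""
    return posixpath.relpath(shader, posixpath.dirname(preset))


def from_relative(stored: str, preset: str) -> str:
    """Turn a stored relative path back into an absolute one when the preset loads."""
    return posixpath.normpath(posixpath.join(posixpath.dirname(preset), stored))


shader = "/retroarch/shaders/crt/crt-example.glsl"
stored = to_relative(shader, "/retroarch/presets/my-crt.glslp")
print(stored)  # ../shaders/crt/crt-example.glsl

# Same folder structure under a different root (e.g. another machine): still correct.
print(from_relative(stored, "/home/user/ra/presets/my-crt.glslp"))
# /home/user/ra/shaders/crt/crt-example.glsl

# Preset moved one level deeper without readjusting its paths: wrong location.
print(from_relative(stored, "/retroarch/presets/crt/my-crt.glslp"))
# /retroarch/presets/shaders/crt/crt-example.glsl
```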

If that was not enough, there is also a new `--set-shader` command line option, which works like an override for auto-loading presets.
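One way to picture the override is as a precedence check that runs before any auto-loading preset is considered. In the sketch below, the `<core>/<game>` and `<core>/<core>` file layout and the "most specific preset wins" order (game, then core, then global) are assumptions made for illustration. The only behavior taken from the text above is that `--set-shader` takes priority over auto-loading presets.

```python
from typing import AbstractSet, Optional


def pick_preset(core: str, game: str, existing: AbstractSet[str],
                set_shader: Optional[str] = None, ext: str = ".glslp") -> Optional[str]:
    """Choose the preset to load at startup; layout and ordering are assumptions."""
    if set_shader is not None:
        return set_shader                      # --set-shader overrides auto-loading
    candidates = (
        f"{core}/{game}{ext}",                 # game-specific preset
        f"{core}/{core}{ext}",                 # core-wide preset
        f"global{ext}",                        # new global preset
    )
    for candidate in candidates:
        if candidate in existing:
            return candidate
    return None                                # no shader at all


presets = {"Snes9x/Snes9x.glslp", "global.glslp"}
print(pick_preset("Snes9x", "Super Metroid", presets))              # Snes9x/Snes9x.glslp
print(pick_preset("Snes9x", "Super Metroid", presets,
                  set_shader="shaders/crt.glslp"))                  # shaders/crt.glslp
```

Returning `None` when nothing matches reflects the new behavior where content without a preset simply runs without shaders.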

With all these additions came one removal: the `video_shader` setting, because it didn’t really fit into how auto-loading presets work.

This is the basic gist of all the shader changes.


The online documentation has been updated accordingly; you can find the new shader user guide here: https://docs.libretro.com/guides/shaders/

Miscellaneous

Read our CHANGELOG here.

- New behavior for the Escape key on keyboards – previously, pressing Escape would immediately exit the program. Now, you need to press Escape (or any other key bound to “Quit RetroArch”) twice in order to quit. If you dislike this new behavior, go to Settings – Input and turn off ‘Press quit twice’.

- If the user selects a core that requires a different video driver than the one currently in use (for instance, the Direct3D 11 driver is active while the core requires OpenGL), RetroArch will now warn the user about this after the core fails to load.

- The following features have been enabled by default:

  - Per-content playtime logging – Last Played, How many hours/minutes played, etc.

  - Playlist sublabels, showing more information about each playlist entry.

- Some refinements to the core loading system – after a core is loaded, it won’t show ‘Quick Menu’ until the core is actually running.

- More refinements – when a core is running, the ‘Load Core’ option is hidden. The user first needs to select ‘Close Content’ before being able to select another core from ‘Load Core’ again. This should improve the overall stability of the program.

- Ability to hand pick which settings categories get shown (Settings -> User Interface -> Views).

- Windows/Linux/Mac: Better resizing of the menu graphics when resizing the window in windowed mode.

- XMB: New settings allow you to choose between a variety of fancy menu animation effects: Animation Horizontal Highlight, Animation Move Up/Down, and Animation Main Menu Opens/Closes.
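The ‘Press quit twice’ change in the list above is essentially a small confirmation state machine. The sketch below is a hypothetical model of it. The three-second confirmation window is an assumed value, not a documented RetroArch setting, and the class and method names are illustrative.

```python
from typing import Optional


class QuitConfirmation:
    """Hypothetical model of 'Press quit twice'; the time window is an assumed value."""

    def __init__(self, press_quit_twice: bool = True, window_s: float = 3.0) -> None:
        self.press_quit_twice = press_quit_twice
        self.window_s = window_s
        self._first_press: Optional[float] = None

    def on_quit_pressed(self, now: float) -> bool:
        """Return True when the program should actually exit."""
        if not self.press_quit_twice:
            return True                          # old behavior: exit immediately
        if self._first_press is not None and now - self._first_press <= self.window_s:
            return True                          # second press in time: quit
        self._first_press = now                  # first press: ask for confirmation
        return False


quit_ = QuitConfirmation()
print(quit_.on_quit_pressed(0.0), quit_.on_quit_pressed(1.0))  # False True
```

A press that arrives after the window has expired simply starts a new confirmation, so a stray Escape press long ago never causes an instant exit.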