Hot on the heels of our Beetle PSX dynarec public beta release, here comes another bombshell, this time targeting that other big fifth-generation console, the Nintendo 64.

ParaLLEl N64 is back with a vengeance, and this time Parallel RSP, coupled with Angrylion RDP Plus, is the enabling technology behind a big performance jump in low-level accurate N64 emulation.

Parallel RSP

Today we have released a new version of Parallel N64 for Windows and Linux that brings back the Parallel RSP dynarec. Together with multithreaded Angrylion, this makes Parallel N64 the fastest LLE N64 emulator by far.

We have also added the missing bits and pieces to Parallel RSP needed to get several games working, such as World Driver Championship, Stunt Racer 64, and Gauntlet Legends. On top of that, we optimized parts of Angrylion RDP Plus, which greatly improved core/thread utilization on a 16-core Ryzen CPU. These improvements should scale down similarly well to CPUs with fewer cores.

Just to illustrate how dramatic a difference all these performance-focused enhancements make: on an underpowered 2012 Core i5 laptop, the following games can be played at full speed: Resident Evil 2, Mario Kart 64, 1080 Snowboarding, Doom 64, Quake 64, Mischief Makers, Bakuretsu Muteki Bangaioh, Bust-A-Move 2: Arcade Edition, Kirby 64 – The Crystal Shards, Mortal Kombat Trilogy, Forsaken 64, Harvest Moon 64.

A 2012 Core i5 desktop CPU like the Core i5 3570K should be more than capable of running the vast majority of N64 games at full speed with Angrylion + Parallel RSP; only rare exceptions, such as Star Wars Episode I: Racer, are too demanding for it.

Dramatically lowering the hardware requirements like this has vast implications for the viability of low-level accurate N64 emulation, and opens the door for more people to enjoy bug-free N64 emulation, which was previously the preserve of only the most powerful PCs.

Right now, only Linux and Windows can benefit from Parallel RSP, because it depends on LLVM. This might be resolved later on, though.

How to get it

On Windows and Linux: Download the latest Parallel N64 core from RetroArch’s Online Updater menu. You can either download it from Core Updater or, if you already had a prior version of Parallel N64 installed, select ‘Update Installed Cores’.

IMPORTANT: On Linux, the situation is a bit more complicated. If the core won’t load on your Linux distribution, this is most likely because LLVM on Linux normally links against libtinfo. For this version of Parallel N64, you will need to make sure you have libtinfo5 installed.

On Ubuntu Linux, you can do this by opening a terminal and running the following:

sudo apt-get install libtinfo5

Consult the documentation of your Linux distribution’s package manager for details on how to install this package. Once it is installed, the core should work. If you already have a more recent version of libtinfo installed, creating a symlink named libtinfo.so.5 that points to it might be enough.
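Before installing packages or creating symlinks, it can help to check which libtinfo versions the dynamic loader can already find. The following is a minimal Python sketch, assuming the core needs the `libtinfo.so.5` soname provided by the `libtinfo5` package; the helper name `can_load` is our own and is not part of RetroArch or Parallel N64.

```python
import ctypes
import ctypes.util


def can_load(soname: str) -> bool:
    """Return True if the dynamic loader can open the given shared library."""
    try:
        ctypes.CDLL(soname)
        return True
    except OSError:
        # Raised when the loader cannot find or open the library.
        return False


if __name__ == "__main__":
    # Assumption: the core needs libtinfo.so.5 (from the libtinfo5 package).
    for soname in ("libtinfo.so.5", "libtinfo.so.6"):
        status = "found" if can_load(soname) else "missing"
        print(f"{soname}: {status}")
    # find_library() reports which libtinfo, if any, the system would pick up.
    print("find_library('tinfo') ->", ctypes.util.find_library("tinfo"))
```

If `libtinfo.so.5` is reported missing but `libtinfo.so.6` is found, that is the situation where the symlink workaround might help; if neither is found, installing the package is the first step.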

How to use it

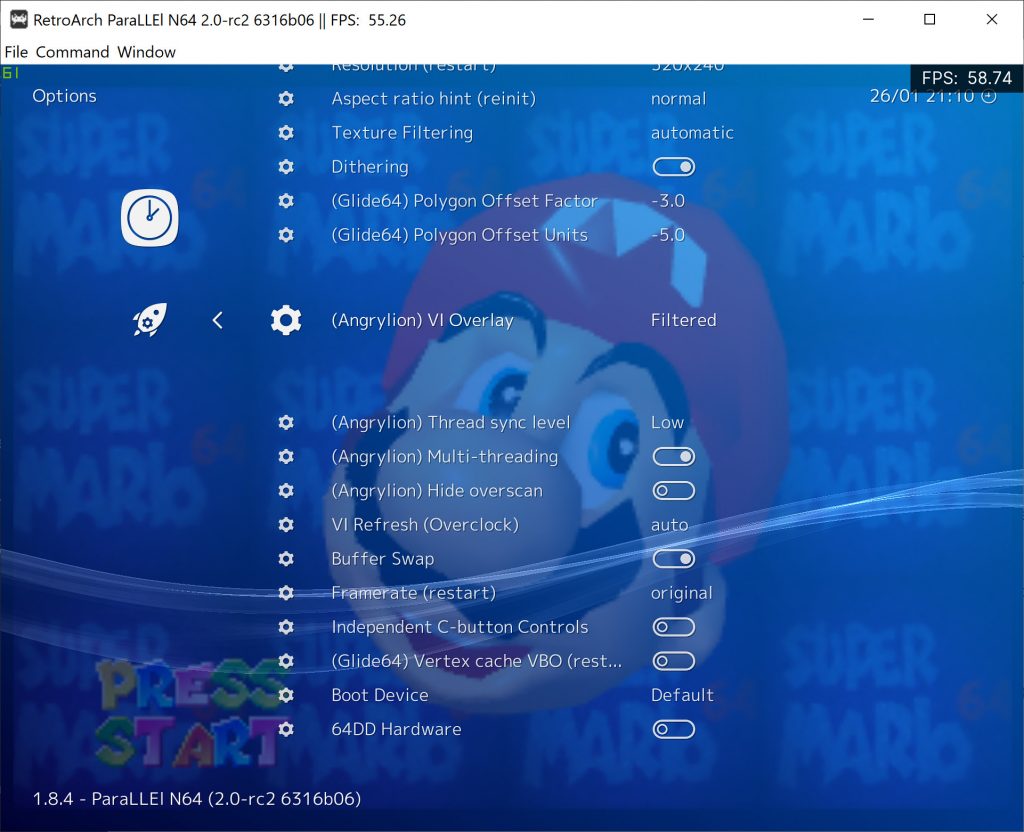

Go into Quick Menu -> Options.

Make sure that ‘GFX Plugin’ is set to ‘angrylion’, and ‘RSP Plugin’ is set to ‘parallel’.

Restart the core.

Explanation of the options

Angrylion (VI Mode) – The N64’s video interface (VI) post-processes the image in an elaborate way before it is output. This option selects how much of that post-processing is applied:

VI Filter – All VI filtering is applied, with nothing omitted.

VI Filter – AA+Blur – Only the VI’s anti-aliasing and blur filtering are applied.

VI Filter – AA+DeDither – Only the VI’s anti-aliasing and dedithering filtering are applied.

VI Filter – AA Only – Only the VI’s anti-aliasing is applied.

Unfiltered – This is the fastest mode. No VI filtering of any kind is applied; the color buffer is output directly.

Depth – Shows the depth buffer as a grayscale image.

Coverage – Shows coverage as a grayscale image.

Angrylion (Thread sync level) – For most games it is fine to leave this at ‘Low’. Low is the fastest setting, while High is significantly slower. Games that need Thread sync level set to High to prevent rendering glitches include, but are not limited to, StarCraft 64, Conker’s Bad Fur Day, and Paper Mario.

Angrylion (Multi-threading) – You will want to always enable this. When it is disabled, Angrylion runs entirely single-threaded, which is much slower.

Increased compatibility for Parallel RSP

The following games will now work with Parallel RSP:

* World Driver Championship

* Gauntlet Legends

* Stunt Racer 64

* Mario no Photopie (graphics fixed)

Performance tests

NOTE: All these tests were performed with Super Mario 64 (USA). The exact scene tested can be seen in the screenshot below.

* Cxd4 refers to the interpreter RSP core. It was previously the only option you could use in combination with Angrylion, since Angrylion needs an LLE RSP core and cannot work with an HLE RSP core.

* All the VI Filter tests were run with Thread Sync set to Low.

Test hardware: Desktop PC – AMD Ryzen 9 3950X, Windows 10 (16 cores, 32 HW threads)

| RSP Mode | VI Filter | VI Filter – AA+Blur | VI Filter – AA+DeDither | VI Filter – AA Only | Unfiltered – Thread Sync Low | Unfiltered – Thread Sync Mid | Unfiltered – Thread Sync High |
|---|---|---|---|---|---|---|---|
| Cxd4 | 174 VI/s | 176 VI/s | 175 VI/s | 178 VI/s | 180 VI/s | 175 VI/s | 92 VI/s |
| Parallel RSP | 235 VI/s | 238 VI/s | 238 VI/s | 240 VI/s | 245 VI/s | 235 VI/s | 106 VI/s |

Test hardware: Desktop PC – Intel Core i7 7700K @ 4.2GHz, Windows 10 (4 cores, 8 HW threads)

| RSP Mode | VI Filter | VI Filter – AA+Blur | VI Filter – AA+DeDither | VI Filter – AA Only | Unfiltered – Thread Sync Low | Unfiltered – Thread Sync Mid | Unfiltered – Thread Sync High |
|---|---|---|---|---|---|---|---|
| Cxd4 | 95 VI/s | 96 VI/s | 96 VI/s | 99 VI/s | 104 VI/s | 96 VI/s | 86 VI/s |
| Parallel RSP | 139 VI/s | 145 VI/s | 144 VI/s | 151 VI/s | 170 VI/s | 146 VI/s | 121 VI/s |

Test hardware: Desktop PC – Intel Core i5 3570K @ 4GHz, Windows 7 x64 (4 cores, 4 HW threads)

| RSP Mode | VI Filter | VI Filter – AA+Blur | VI Filter – AA+DeDither | VI Filter – AA Only | Unfiltered – Thread Sync Low | Unfiltered – Thread Sync Mid | Unfiltered – Thread Sync High |
|---|---|---|---|---|---|---|---|
| Cxd4 | 89 VI/s | 92 VI/s | 91 VI/s | 95 VI/s | 103 VI/s | 103 VI/s | 97 VI/s |
| Parallel RSP | 118 VI/s | 124 VI/s | 121 VI/s | 128 VI/s | 145 VI/s | 145 VI/s | 133 VI/s |

Test hardware: Laptop PC – Intel Core i5 3210M @ 2.50GHz, Ubuntu Linux 19.04 (2 cores, 4 HW threads)

This is a 2012 Lenovo G580 laptop.

| RSP Mode | VI Filter | VI Filter – AA+Blur | VI Filter – AA+DeDither | VI Filter – AA Only | Unfiltered – Thread Sync Low | Unfiltered – Thread Sync Mid | Unfiltered – Thread Sync High |
|---|---|---|---|---|---|---|---|
| Cxd4 | 39 VI/s | 39 VI/s | 42 VI/s | 41 VI/s | 43 VI/s | 39 VI/s | 39 VI/s |
| Parallel RSP | 52 VI/s | 55 VI/s | 54 VI/s | 58 VI/s | 67 VI/s | 66 VI/s | 61 VI/s |
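To put these tables in perspective, the following Python sketch computes Parallel RSP’s speedup over Cxd4 for two of the columns and compares each figure with full speed. The VI/s numbers are copied from the tables; the 60 VI/s full-speed target for an NTSC game such as Super Mario 64 (USA) is our assumption, and `speedup`/`headroom` are illustrative helper names.

```python
# VI/s figures copied from the tables (Super Mario 64 USA test scene).
# Each entry is (Cxd4, Parallel RSP).
RESULTS = {
    "Ryzen 9 3950X": {"VI Filter": (174, 235), "Unfiltered, Sync Low": (180, 245)},
    "Core i7 7700K": {"VI Filter": (95, 139), "Unfiltered, Sync Low": (104, 170)},
    "Core i5 3570K": {"VI Filter": (89, 118), "Unfiltered, Sync Low": (103, 145)},
    "Core i5 3210M": {"VI Filter": (39, 52), "Unfiltered, Sync Low": (43, 67)},
}

# Assumption: an NTSC game runs at full speed at 60 VI/s.
NTSC_FULL_SPEED_VI = 60.0


def speedup(cxd4: float, parallel: float) -> float:
    """Ratio of Parallel RSP throughput to Cxd4 throughput."""
    return parallel / cxd4


def headroom(vi_per_sec: float, target: float = NTSC_FULL_SPEED_VI) -> float:
    """Throughput as a multiple of the full-speed VI rate (>= 1.0 means full speed)."""
    return vi_per_sec / target


if __name__ == "__main__":
    for cpu, modes in RESULTS.items():
        for mode, (cxd4, parallel) in modes.items():
            print(f"{cpu:14} {mode:21} speedup x{speedup(cxd4, parallel):.2f}  "
                  f"headroom Cxd4 x{headroom(cxd4):.2f} -> "
                  f"Parallel RSP x{headroom(parallel):.2f}")
```

With the assumed 60 VI/s target, and of the two columns computed here, the 2012 laptop only clears full speed in this scene with Parallel RSP in the Unfiltered column (67 VI/s, roughly 1.12x), while the VI Filter column (52 VI/s) falls short.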

For a configuration as low-end as this, we recommend simply keeping ‘Angrylion (VI Mode)’ set to ‘Unfiltered’.

On a configuration this low-end, the majority of games still dip below full speed; however, a fair number of games already run at full speed. This is by no means a definitive or exhaustive list, but these are the games I can confirm run at full speed with very rare dips, if any:

Resident Evil 2, Mario Kart 64, 1080 Snowboarding, Doom 64, Quake 64, Mischief Makers, Bakuretsu Muteki Bangaioh, Bust-A-Move 2: Arcade Edition, Kirby 64 – The Crystal Shards, Mortal Kombat Trilogy, Forsaken 64, Harvest Moon 64

Currently known issues

* Do not enable ‘Sync to Exact Content Framerate’ when using Angrylion + Parallel RSP; it does not give good results with this combination.

* Compiling a block for the first time can cause a very slight stutter. Thankfully, this only happens the first time a block is encountered; it does not happen again for the rest of the session.
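The stutter only happens once because of the usual dynarec pattern: a block is translated the first time it is reached, the result is cached, and later executions skip compilation. The Python sketch below illustrates that general pattern only; `BlockCache` and `compile_block` are hypothetical stand-ins, not Parallel RSP’s actual code (which relies on LLVM), and keying the cache on the block’s start address plus its raw instruction bytes is our assumption.

```python
from typing import Callable, Dict, Tuple

# A "compiled" block is modeled as a callable that takes a PC and returns the next PC.
CompiledBlock = Callable[[int], int]
BlockKey = Tuple[int, bytes]


class BlockCache:
    """Hypothetical translation cache: compile each distinct block once, reuse it afterwards."""

    def __init__(self, compile_block: Callable[[int, bytes], CompiledBlock]) -> None:
        self._compile_block = compile_block
        self._blocks: Dict[BlockKey, CompiledBlock] = {}
        self.compile_count = 0

    def lookup(self, pc: int, code: bytes) -> CompiledBlock:
        key = (pc, code)
        block = self._blocks.get(key)
        if block is None:
            # Slow path: only taken the first time this block is seen (the "stutter").
            block = self._compile_block(pc, code)
            self._blocks[key] = block
            self.compile_count += 1
        # Fast path: every later lookup returns the cached block.
        return block


def compile_block(pc: int, code: bytes) -> CompiledBlock:
    """Stand-in for a real JIT: the 'compiled' block just advances the PC past the code."""
    length = len(code)
    return lambda current_pc: current_pc + length


if __name__ == "__main__":
    cache = BlockCache(compile_block)
    code = bytes(16)  # placeholder bytes, four 32-bit instructions' worth
    for _ in range(1000):
        next_pc = cache.lookup(0x000, code)(0x000)
    print(cache.compile_count, next_pc)  # prints "1 16"
```

Including the code bytes in the key means different microcode loaded at the same address gets its own entry instead of reusing stale compiled code; each distinct block still pays the compilation cost only once.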

* On Linux there might be dependency issues related to libtinfo5. See the ‘How to get it’ section, which explains how to solve this problem, at least on Ubuntu Linux. NOTE: This situation might resolve itself in the future if we move away from LLVM for the RSP part.