Over the last few months, after completing the paraLLEl-RSP rewrite to a Lightrec-based recompiler, I’ve been plugging away on a project which I had been putting off for years: implementing the N64 RDP with Vulkan compute shaders in a low-level fashion. Every design decision of the old implementation has been scrapped, and a new implementation has arisen from the ashes. I’ve learned a lot of advanced compute techniques, and I’m able to use far better methods than I was ever able to use back in the early days. This time, I wanted to do it right. Writing a good, accurate software renderer on a massively parallel architecture is not easy and you need to rethink everything. Serial C code will get you nowhere on a GPU, but it’s a fun puzzle, and quite rewarding when stuff works.

The new implementation is a standalone repository that could be integrated into any emulator given the effort: https://github.com/Themaister/parallel-rdp. For this first release, I integrated it into parallel-n64. It is licensed as MIT, so feel free to integrate it in other emulators as well.

Why?

I wanted to prove to myself that I could, and it’s … a little fun? I won’t claim this is more than it is. 🙂

Chasing bit-exactness

The new implementation was developed in a test-driven way. The Angrylion renderer is used as a reference, and the goal is to generate the exact same output in the new renderer. I started by writing an RDP conformance suite. Here, we generate RDP commands in C++, run the commands across different implementations, and compare results in RDRAM (and hidden RDRAM of course, 9-bit RAM is no joke). To pass, we must get an exact match. This is all fixed-point arithmetic, no room for error! I’ve basically just been studying Angrylion to understand what on earth is supposed to happen, and trying to make sense of what the higher level goal of everything is. In LLE, there’s a lot of weird magic that just happens to work out.
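As a rough illustration of what "exact match" means here, the comparison step can be sketched as below. This is not code from the actual conformance suite: the `MemoryImage` struct, the separate `hidden` array, and the function names are all hypothetical, chosen only to show that both visible and hidden memory have to agree byte for byte.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical snapshot of what a renderer leaves behind after running a test.
// "rdram" is the visible memory, "hidden" stands in for the extra bits of the
// 9-bit RAM. Storing them in a separate array is an assumption of this sketch.
struct MemoryImage {
    std::vector<uint8_t> rdram;
    std::vector<uint8_t> hidden;
};

// Returns the index of the first differing byte, or -1 if the buffers are identical.
inline std::ptrdiff_t first_mismatch(const std::vector<uint8_t> &a,
                                     const std::vector<uint8_t> &b) {
    assert(a.size() == b.size());
    for (std::size_t i = 0; i < a.size(); i++)
        if (a[i] != b[i])
            return static_cast<std::ptrdiff_t>(i);
    return -1;
}

// A test passes only if visible and hidden memory both match exactly.
// There is no tolerance or epsilon anywhere.
inline bool images_match(const MemoryImage &reference, const MemoryImage &candidate) {
    return first_mismatch(reference.rdram, candidate.rdram) < 0 &&
           first_mismatch(reference.hidden, candidate.hidden) < 0;
}
```

Reporting the first mismatching index rather than a plain pass/fail makes it easier to map a failure back to a pixel.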

I’m quite happy with where I’ve ended up with testing and seeing output like this gives me a small dopamine shot before committing:

…

122/163 Test #122: rdp-test-interpolation-color-texture-ci4-tlut-ia16 ………………………….. Passed 2.50 sec

Start 123: rdp-test-interpolation-color-texture-ci8-tlut-ia16

123/163 Test #123: rdp-test-interpolation-color-texture-ci8-tlut-ia16 ………………………….. Passed 2.40 sec

Start 124: rdp-test-interpolation-color-texture-ci16-tlut-ia16

124/163 Test #124: rdp-test-interpolation-color-texture-ci16-tlut-ia16 …………………………. Passed 2.37 sec

Start 125: rdp-test-interpolation-color-texture-ci32-tlut-ia16

125/163 Test #125: rdp-test-interpolation-color-texture-ci32-tlut-ia16 …………………………. Passed 2.45 sec

Start 126: rdp-test-interpolation-color-texture-2cycle-lod-frac

126/163 Test #126: rdp-test-interpolation-color-texture-2cycle-lod-frac ………………………… Passed 2.51 sec

Start 127: rdp-test-interpolation-color-texture-perspective

127/163 Test #127: rdp-test-interpolation-color-texture-perspective ……………………………. Passed 2.50 sec

Start 128: rdp-test-interpolation-color-texture-perspective-2cycle-lod-frac

128/163 Test #128: rdp-test-interpolation-color-texture-perspective-2cycle-lod-frac ……………… Passed 3.29 sec

Start 129: rdp-test-interpolation-color-texture-perspective-2cycle-lod-frac-sharpen

129/163 Test #129: rdp-test-interpolation-color-texture-perspective-2cycle-lod-frac-sharpen ………. Passed 3.26 sec

Start 130: rdp-test-interpolation-color-texture-perspective-2cycle-lod-frac-detail

130/163 Test #130: rdp-test-interpolation-color-texture-perspective-2cycle-lod-frac-detail ……….. Passed 3.48 sec

Start 131: rdp-test-interpolation-color-texture-perspective-2cycle-lod-frac-sharpen-detail

131/163 Test #131: rdp-test-interpolation-color-texture-perspective-2cycle-lod-frac-sharpen-detail … Passed 3.26 sec

Start 132: rdp-test-texture-load-tile-16-yuv…

151/163 Test #151: vi-test-aa-none …………………………………………………………. Passed 21.19 sec

Start 152: vi-test-aa-extra-dither-filter

152/163 Test #152: vi-test-aa-extra-dither-filter ……………………………………………. Passed 48.77 sec

Start 153: vi-test-aa-extra-divot

153/163 Test #153: vi-test-aa-extra-divot …………………………………………………… Passed 64.29 sec

Start 154: vi-test-aa-extra-dither-filter-divot

154/163 Test #154: vi-test-aa-extra-dither-filter-divot ………………………………………. Passed 65.90 sec

Start 155: vi-test-aa-extra-gamma

155/163 Test #155: vi-test-aa-extra-gamma …………………………………………………… Passed 48.28 sec

Start 156: vi-test-aa-extra-gamma-dither

156/163 Test #156: vi-test-aa-extra-gamma-dither …………………………………………….. Passed 48.18 sec

Start 157: vi-test-aa-extra-nogamma-dither

157/163 Test #157: vi-test-aa-extra-nogamma-dither …………………………………………… Passed 47.56 sec…

100% tests passed, 0 tests failed out of 163 #feelsgoodman

Ideally, if someone is clever enough to hook up a serial connection to the N64, it might be possible to run these tests through a real N64, which would be interesting.

I also fully implemented the VI this time around. Its output is bit-exact with Angrylion in my tests, and there is a VI conformance suite to validate this as well. I implemented almost the entire thing without even running actual content. Once I got to test real content and sort out the last weird bugs, we get to the next important part of a test-driven development workflow …

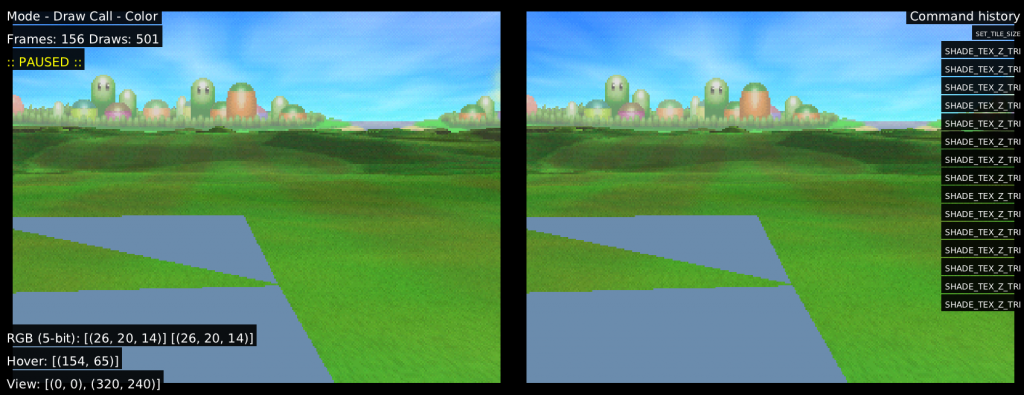

The importance of dumping formats

A critical aspect of verifying behavior is being able to dump RDP commands from the emulator and replay them.

On the left I have Angrylion and on the right paraLLEl-RDP, running side by side from a dump where I can step draw by draw and drill down into any pesky bugs quite effectively. This humble tool has been invaluable. The Angrylion backend in parallel-n64 can be configured to generate dumps, which are then used to drill down into rendering bugs offline.

Compatibility

Compatibility is much improved and should be quite high. I won’t claim it’s perfect, but I’m quite happy with it so far. We went through essentially all relevant titles during testing (just the first few minutes), and found and fixed the few issues which popped up. Many games which were completely broken in the old implementation now work just fine. I’m fairly confident that any remaining bugs are solvable this time around if/when they show up.

Implementation techniques

With Vulkan in 2020 I have some more tools in my belt than were available back in the day. Vulkan is quite a capable compute API now.

Enforcing RDRAM coherency

A major pain point of any N64 emulator is the fact that RDRAM is shared for the CPU and RDP, and games sure know how to take advantage of this. This creates a huge burden on GPU-accelerated implementations as we now have to ensure full coherency to make it accurate. Most HLE emulators simply don’t care or employ complicated heuristics and workarounds, and that’s fine, but it’s not good enough for LLE.

The previous implementation tried to do “framebuffer manager” techniques similar to HLE emulators, but this was the wrong approach and led to a design which was impossible to fix. What if … we just import RDRAM as a buffer straight into the Vulkan driver and render to that, wouldn’t that be awesome? Yes … yes, it would be, and that’s what I did. We have an obscure, but amazing extension in Vulkan called VK_EXT_external_memory_host which lets me import RDRAM from the emulator straight into Vulkan and render to it over the PCI-e bus. That way, all framebuffer management woes simply disappear, I render straight into RDRAM, and the only thing left to do is to handle synchronization. If you’re worried about rendering over the PCI-e bus, then don’t be. The bandwidth required to write out a 320×240 framebuffer is absolutely trivial, especially considering that we’re doing …

Tile-based rendering

The last implementation was tile-based as well, but the design is much improved. This time around all tile binning is done entirely on the GPU in parallel, using techniques I implemented in https://github.com/Themaister/RetroWarp, which was the precursor project for this new paraLLEl-RDP. Using tile-based rendering, it does not really matter that we’re effectively rendering over the PCI-e bus as tile-based rendering is extremely good at minimizing external memory bandwidth. Of course, for iGPU, there is no (?) external PCI-e bus to fight with to begin with, so that’s nice!
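To make the idea of tile binning concrete, here is a deliberately serial C++ sketch. The real binning runs on the GPU in parallel and is certainly more refined. The tile size, the `Bounds` struct, the conservative bounding-box test, and the 32-primitive limit are all assumptions made for this illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Inclusive pixel-space bounding box of one primitive (hypothetical type).
struct Bounds { int x0, y0, x1, y1; };

constexpr int TileSize = 8;  // assumed tile size, not taken from paraLLEl-RDP

// Returns one 32-bit mask per tile. Bit i set means primitive i may touch that tile,
// so the shading pass for a tile only has to walk the primitives in its mask.
inline std::vector<uint32_t> bin_primitives(const std::vector<Bounds> &prims,
                                            int width, int height) {
    assert(prims.size() <= 32);  // one mask bit per primitive in this sketch
    const int tiles_x = (width + TileSize - 1) / TileSize;
    const int tiles_y = (height + TileSize - 1) / TileSize;
    std::vector<uint32_t> masks(static_cast<std::size_t>(tiles_x) * tiles_y, 0u);

    for (std::size_t i = 0; i < prims.size(); i++) {
        // Clamp the box to the framebuffer and skip primitives that fall outside.
        const int x0 = std::max(prims[i].x0, 0), y0 = std::max(prims[i].y0, 0);
        const int x1 = std::min(prims[i].x1, width - 1);
        const int y1 = std::min(prims[i].y1, height - 1);
        if (x0 > x1 || y0 > y1)
            continue;
        // Conservative test: mark every tile overlapped by the bounding box.
        for (int ty = y0 / TileSize; ty <= y1 / TileSize; ty++)
            for (int tx = x0 / TileSize; tx <= x1 / TileSize; tx++)
                masks[static_cast<std::size_t>(ty) * tiles_x + tx] |= 1u << i;
    }
    return masks;
}
```

Once every tile knows which primitives can touch it, a tile can be shaded start to finish in fast on-chip storage and written out once, which is why the external memory traffic stays low.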

Ubershaders with asynchronous pipeline optimization

The entire renderer is split into a very small selection of Vulkan GLSL shaders which are precompiled into SPIR-V. This time, I take full advantage of Vulkan specialization constants which allow me to fine-tune the shader for specific RDP state. This turned out to be an absolutely massive win for performance. To avoid the dreaded shader compilation stutter, I can always fall back to a generic ubershader while the specialized pipeline is being compiled. The ubershader is slow, but it works for any combination of state. This is a very similar idea to what Dolphin pioneered for emulation a few years ago.
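The fallback logic can be sketched roughly as follows. This is a toy model, not the renderer's actual code: `PipelineCache`, `PipelineKind`, and the integer `state_key` are hypothetical, and the background compile thread is replaced by an explicit `on_compiled` call so the example stays deterministic (a real version would also need locking).

```cpp
#include <cassert>
#include <cstdint>
#include <unordered_map>

enum class PipelineKind { Ubershader, Specialized };

// Toy model of "use the ubershader until the specialized pipeline is ready".
class PipelineCache {
public:
    // Called at draw time and never blocks. The first request for a given state
    // queues a compile, and the ubershader is returned until that compile finishes.
    PipelineKind request(uint32_t state_key) {
        auto it = compiled_.find(state_key);
        if (it == compiled_.end()) {
            compiled_.emplace(state_key, false);
            ++compiles_queued_;  // stand-in for handing work to a compile thread
            return PipelineKind::Ubershader;
        }
        return it->second ? PipelineKind::Specialized : PipelineKind::Ubershader;
    }

    // Called when the (here imaginary) background compile completes.
    void on_compiled(uint32_t state_key) {
        auto it = compiled_.find(state_key);
        assert(it != compiled_.end() && "compile finished for a state never requested");
        if (it != compiled_.end())
            it->second = true;
    }

    int compiles_queued() const { return compiles_queued_; }

private:
    std::unordered_map<uint32_t, bool> compiled_;  // state key -> specialized pipeline ready?
    int compiles_queued_ = 0;
};
```

The property that matters is that `request` never waits: a draw with unseen state costs some GPU time in the slow path instead of a visible stutter on the CPU.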

8/16-bit integer support

Memory accesses in the RDP are often 8 or 16 bits, and thus it is absolutely critical that we make use of 8/16-bit storage features to interact directly with RDRAM, and if the GPU supports it, we can make use of 8 and 16-bit arithmetic as well for good measure.
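A small C++ sketch shows why narrow stores matter. A 16-bit framebuffer pixel on the N64 packs red, green and blue into 5 bits each plus 1 alpha/coverage bit, so two pixels share every 32-bit word. If a shader could only store 32 bits at a time, writing one pixel would become a read-modify-write of the word, which races when two threads own neighboring pixels. The function names are hypothetical, and which half of the word a pixel lands in is an assumption of this sketch.

```cpp
#include <cassert>
#include <cstdint>

// Pack 5-bit R, G, B and a 1-bit alpha into the 16-bit 5/5/5/1 layout.
inline uint16_t pack_rgba5551(unsigned r, unsigned g, unsigned b, unsigned a) {
    assert(r < 32 && g < 32 && b < 32 && a < 2);
    return static_cast<uint16_t>((r << 11) | (g << 6) | (b << 1) | a);
}

// What a 16-bit pixel store degenerates to when only 32-bit accesses exist:
// read the whole word, patch one half, write the whole word back.
// Assumption: pixel 0 occupies the low 16 bits, pixel 1 the high 16 bits.
inline uint32_t store_pixel_rmw(uint32_t word, unsigned pixel_in_word, uint16_t pixel) {
    assert(pixel_in_word < 2);
    const unsigned shift = pixel_in_word * 16;
    const uint32_t mask = 0xffffu << shift;
    return (word & ~mask) | (static_cast<uint32_t>(pixel) << shift);
}
```

With native 16-bit storage, each thread simply writes its own `uint16_t` and the other half of the word is never touched.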

Async compute

Async compute is critical as well, since we can make the async compute queue high priority and ensure that RDP shading work happens with very low latency, while VI filtering and frontend shaders can happily chug along in the fragment/graphics queue. Both AMD and NVIDIA now have competent implementations here.

GPU-driven TMEM management

A big mistake I made previously was doing TMEM management on the CPU timeline, which all came crashing down once we needed framebuffer effects. To avoid this, all TMEM uploads are now driven by the GPU. This is probably the hairiest part of paraLLEl-RDP by far, but I have quite a lot of gnarly tests to cover all the relevant corner cases. There are some truly insane edge cases that I cannot handle yet, but the results created would be completely meaningless to any actual content.

Performance

To talk about FPS figures it’s important to consider the three major performance hogs in a low-level N64 emulator, the VR4300 CPU, the RSP and finally the RDP. Emulating the RSP in an LLE fashion is still somewhat taxing, even with a dynarec (paraLLEl-RSP) and even if I make the RDP infinitely fast, there is an upper bound to how fast we can make the emulator run as the CPU and RSP are still completely single threaded affairs. Do keep that in mind. Still, even with multithreaded Angrylion, the RDP represents a quite healthy chunk of overhead that we can almost entirely remove with a GPU implementation.

GPU bound performance

It’s useful to look at what performance we’re getting if emulation was no constraint at all. By adding PARALLEL_RDP_BENCH=1 to environment variables, I can look at how much time is spent on GPU rendering.

Playing on a GTX 1660 Ti outside the castle in Mario 64:

[INFO]: Timestamp tag report: render-pass

[INFO]: 0.196 ms / frame context

[INFO]: 0.500 iterations / frame context

We’re talking ~0.2 ms on GPU to render one frame on average, hello theoretical 5000 VI/s … Somewhat smaller frame times can be observed on my Radeon 5700 XT, but we’re getting frame rates so ridiculously high they become meaningless here. We’ve tested it on quite old cards as well, and the difference in FPS between even something ancient like an R9 290X and a 2080 Ti is minimal, since the time now spent in RDP rendering is completely irrelevant compared to CPU + RSP workloads. We seem to be getting about a 50-100% uplift in FPS, which represents the shaved away overhead that the CPU renderer had. Hello 300+ VI/s!

Unfortunately, Intel iGPU does not fare as well, with an overhead high enough that it does not generally beat multithreaded Angrylion running on CPU. I was somewhat disappointed by this, but I have not gone into any real shader optimization work. My early analysis suggests extremely poor occupancy and a ton of register spilling. I want to create a benchmark tool at some point to help drill into these issues down the line.

It would be interesting to test on the AMD APUs, but none of us have the hardware handy sadly 🙁

Synchronous vs Asynchronous RDP

There are two modes for the RDP. In async mode, the emulation thread does not wait for the GPU to complete rendering. This improves performance, at the cost of accuracy. Many games unfortunately really rely on the unified memory architecture of the N64. The default option is sync, and should be used unless you have a real need for speed, or the game in question does not need sync.

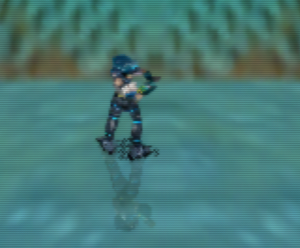

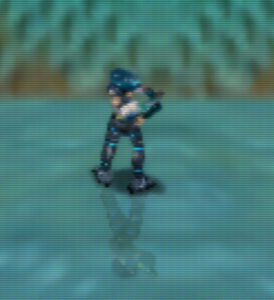

Here we see an example of broken blob shadows caused by async RDP in Jet Force Gemini. This happens because the CPU is actually reading the shadowmap rendered by the RDP, and blurring it on the CPU timeline (why on earth the game would do that is another question), then reuploading it to the RDP. These kinds of effects require very tight sync between CPU and GPU and come up in many games. N64 is particularly notorious for these kinds of rendering challenges.

Of course, given how fast the GPU implementation is on discrete GPUs, sync mode does not really pose an issue. Do note that since we’re using async compute queues here, we are not stalling on frontend shading or anything like that. The typical stall time on the CPU is on the order of 1 ms per frame, which is very acceptable. That includes the render thread doing its thing, submitting that to GPU, getting it executed and coming back to CPU, which has some extra overhead.
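The difference between the two modes can be shown with a single-threaded toy model. Everything here is hypothetical (`ToyRDP`, `submit_fill`, `cpu_read`, `drain`), and the GPU is reduced to a queue of deferred writes. The only point is that in sync mode a CPU read waits for outstanding rendering, while in async mode it can observe stale memory.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <queue>
#include <vector>

// Toy stand-in for an RDP backend that renders into shared RDRAM.
class ToyRDP {
public:
    ToyRDP(std::vector<uint8_t> &rdram, bool synchronous)
        : rdram_(rdram), synchronous_(synchronous) {}

    // Queue a write "on the GPU". RDRAM is not touched until the work is drained.
    void submit_fill(std::size_t offset, uint8_t value) {
        assert(offset < rdram_.size());
        pending_.push([this, offset, value] { rdram_[offset] = value; });
    }

    // The emulated CPU reads RDRAM. Sync mode waits for all outstanding GPU work first.
    uint8_t cpu_read(std::size_t offset) {
        if (synchronous_)
            drain();
        return rdram_[offset];
    }

    // Stand-in for the GPU eventually completing everything submitted so far.
    void drain() {
        while (!pending_.empty()) {
            pending_.front()();
            pending_.pop();
        }
    }

private:
    std::vector<uint8_t> &rdram_;
    bool synchronous_;
    std::queue<std::function<void()>> pending_;
};
```

In this model, a game that reads back a freshly rendered shadowmap would see the old contents in async mode, which is the kind of breakage shown in the screenshots above.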

Road-map for future improvement

I believe this is solid enough for a first release, but there are further avenues for improvement.

Figure out poor performance on Intel iGPU

There is something going on here that we should be able to improve.

Implement a workaround for implementations without VK_EXT_external_memory_host (EDIT: Now implemented as of 2020-05-18)

Unfortunately there is one particular driver on desktop which doesn’t support this, and that’s NVIDIA on Linux (Windows has been supported since 2018 …). Hopefully this gets implemented soon, but we will need a fallback. This will get ugly since we’ll need to start shuffling memory back and forth between RDRAM and a GPU buffer. Hopefully the async transfer queue can help make this less painful. It might also open up some opportunities for mobile, where drivers also don’t implement this extension as we speak. There might also be incentives to rewrite some fundamental assumptions in the N64 emulator plugin specifications (can we please get rid of this crap …). If we can let the GPU backend allocate memory, we don’t need any fancy extension, but that means uprooting 20 years of assumptions and poking into the abyss … Perhaps a new implementation can break new ground here (hi @ares_emu!).

EDIT: This is now done! Takes a 5-10% performance hit in sync mode, but the workaround works quite well. A fine blend of masked SIMD moves, a writemask buffer, and atomics …
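The post only names the ingredients of the workaround, so the following is a guess at how a writemask buffer could be used, not a description of the actual code. The assumption is that the GPU renders into its own copy of RDRAM and flags every byte it writes. When resolving, only flagged bytes are copied back, so anything the CPU wrote elsewhere in the meantime survives. A plain scalar loop stands in for the masked SIMD moves, atomics are left out entirely, and all names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Copy back only the bytes the GPU marked as written, then clear the marks.
// Returns how many bytes were copied.
inline std::size_t resolve_gpu_writes(std::vector<uint8_t> &rdram,
                                      const std::vector<uint8_t> &gpu_copy,
                                      std::vector<uint8_t> &writemask) {
    assert(rdram.size() == gpu_copy.size() && rdram.size() == writemask.size());
    std::size_t copied = 0;
    for (std::size_t i = 0; i < rdram.size(); i++) {
        if (writemask[i] != 0) {
            rdram[i] = gpu_copy[i];  // GPU result wins for bytes it touched
            writemask[i] = 0;        // ready for the next batch of rendering
            copied++;
        }
        // Untouched bytes keep whatever the CPU has in RDRAM.
    }
    return copied;
}
```

Copying the whole GPU buffer back unconditionally would be simpler, but it would clobber CPU writes to memory the GPU never rendered to.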

Internal upscaling?

It is rather counter-intuitive to do upscaling in an LLE emulator, but it might yield some very interesting results. Given how obscenely fast the discrete GPUs are at this task, we should be able to do a 2x or maybe even 4x upscale at way-faster-than-realtime speeds. It would be interesting to explore if this lets us avoid the worst artifacts commonly associated with upscaling in HLE.

Fancier deinterlacer?

Some N64 content runs at 480i, and we can probably spare some GPU cycles running a fancier deinterlacer 😉

Esoteric use cases?

PS1 wobbly polygon rendering has seen some kind of resurgence in recent years in the indie scene; perhaps we’ll see the same for the fuzzy N64 look eventually. With paraLLEl-RDP, it should be possible to build a rendering engine around an N64-lookalike game. That would be cool to see.

Conclusion

This is a somewhat esoteric implementation, but I hope I’ve inspired more implementations like this. Compute-based accurate renderers will hopefully spread to more systems that have difficulties with accurate rendering. I think it’s a very interesting topic, and it’s a fun take on emulation that is not well explored in general.